Illumination-aware Multi-task GANs for Foreground Segmentation

Abstract

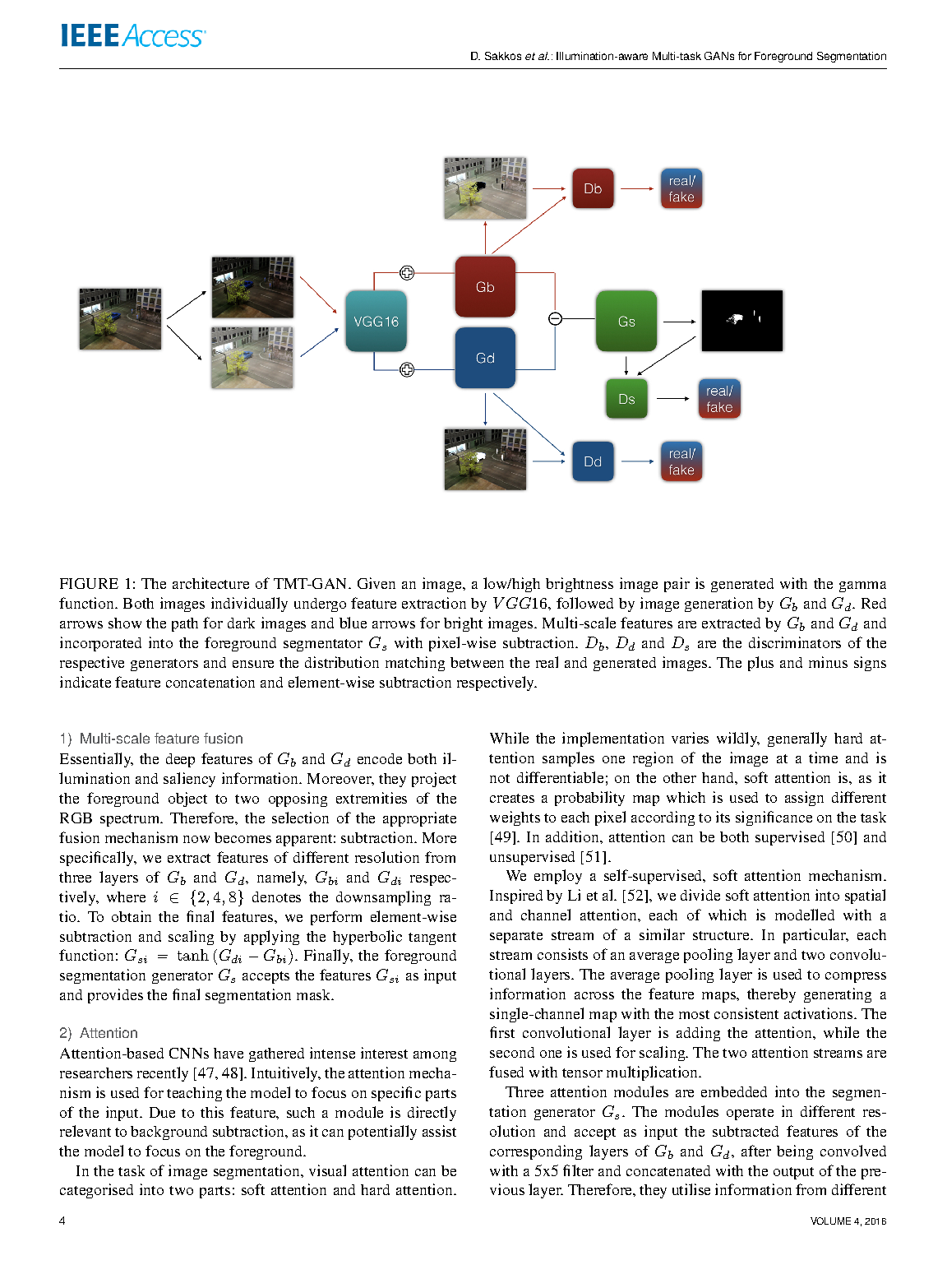

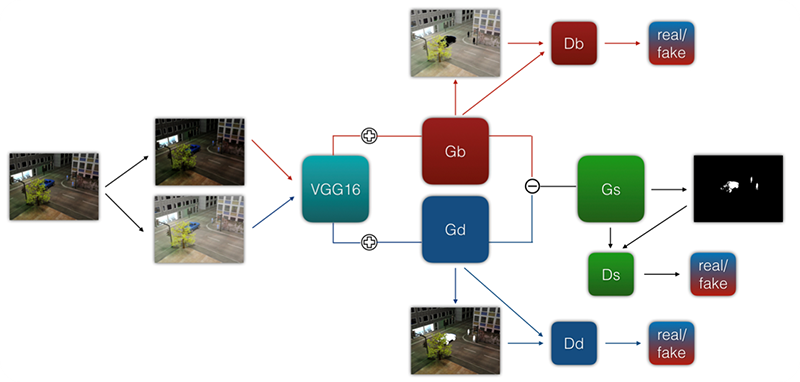

Foreground-background segmentation has been an active research area for many years. However, conventional models fail to produce accurate results on videos with challenging illumination conditions. In this paper, we present a robust model that accurately extracts the foreground even in exceptionally dark or bright scenes, as well as under continuously varying illumination within a video sequence. This is accomplished by a triple multi-task generative adversarial network (TMT-GAN) that effectively models the semantic relationship between dark and bright images and performs binary segmentation end-to-end. Our contribution is two-fold. First, we show that by jointly optimising the GAN loss and the segmentation loss, our network simultaneously learns both tasks, which mutually benefit each other. Second, fusing features of images with varying illumination into the segmentation branch vastly improves the performance of the network. Comparative evaluations on highly challenging real and synthetic benchmark datasets (ESI, SABS) demonstrate the robustness of TMT-GAN and its superiority over state-of-the-art approaches.
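The abstract says that the GAN loss and the segmentation loss are optimised jointly, but it does not give the exact loss terms or their weighting. The sketch below is therefore only an illustration under stated assumptions, not the TMT-GAN objective. It assumes a standard non-saturating adversarial term for the generator plus a pixel-wise binary cross-entropy on the foreground mask, summed with a hypothetical balancing weight `lam`. All function names (`bce`, `generator_adversarial_loss`, `segmentation_loss`, `joint_loss`) are hypothetical.

```python
import math


def bce(pred, target, eps=1e-7):
    """Mean binary cross-entropy between probabilities `pred` and 0/1 labels `target`."""
    total = 0.0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1.0 - eps)  # clamp so log() never sees 0
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(pred)


def generator_adversarial_loss(disc_scores_on_fake):
    # Assumed non-saturating GAN loss: the generator wants D(fake) -> 1.
    return bce(disc_scores_on_fake, [1.0] * len(disc_scores_on_fake))


def segmentation_loss(mask_probs, mask_labels):
    # Assumed pixel-wise BCE on a flattened binary foreground/background mask.
    return bce(mask_probs, mask_labels)


def joint_loss(disc_scores_on_fake, mask_probs, mask_labels, lam=1.0):
    # Hypothetical weighted sum; minimising it updates one shared network
    # for both tasks, which is the sense of "jointly optimising" here.
    return (generator_adversarial_loss(disc_scores_on_fake)
            + lam * segmentation_loss(mask_probs, mask_labels))


if __name__ == "__main__":
    # Discriminator score 0.5 on a generated image, mask probability 0.5
    # for a foreground pixel: each term is ln 2, so the total is 2 * ln 2.
    print(joint_loss([0.5], [0.5], [1.0], lam=1.0))  # about 1.386
```

Setting `lam` to 0 reduces the objective to the adversarial term alone. Larger values push the shared features towards the segmentation task. How the paper actually balances the two tasks should be taken from the paper itself.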

Publication

Illumination-aware Multi-task GANs for Foreground Segmentation by Qianhui Men, Howard Leung, Edmond S. L. Ho and Hubert P. H. Shum in 2021

IEEE Access
